From Idea to Production in Minutes

Neo is an AI development platform with 62 specialized agents that build complete applications from a single prompt. iOS, Android, Web, Games, APIs, and more. Deploy to 13 platforms including AWS, GCP, and Azure.

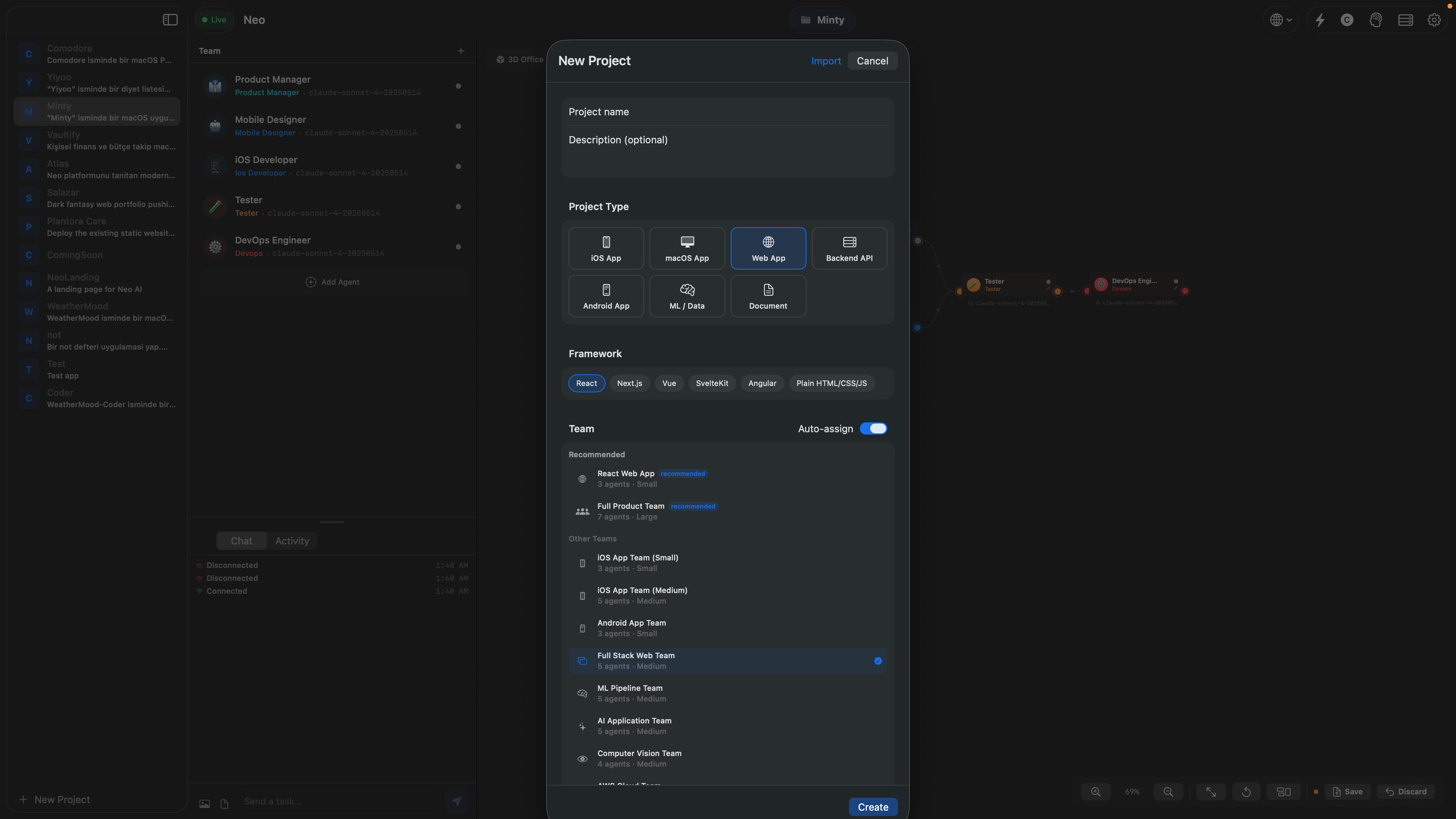

How It Works

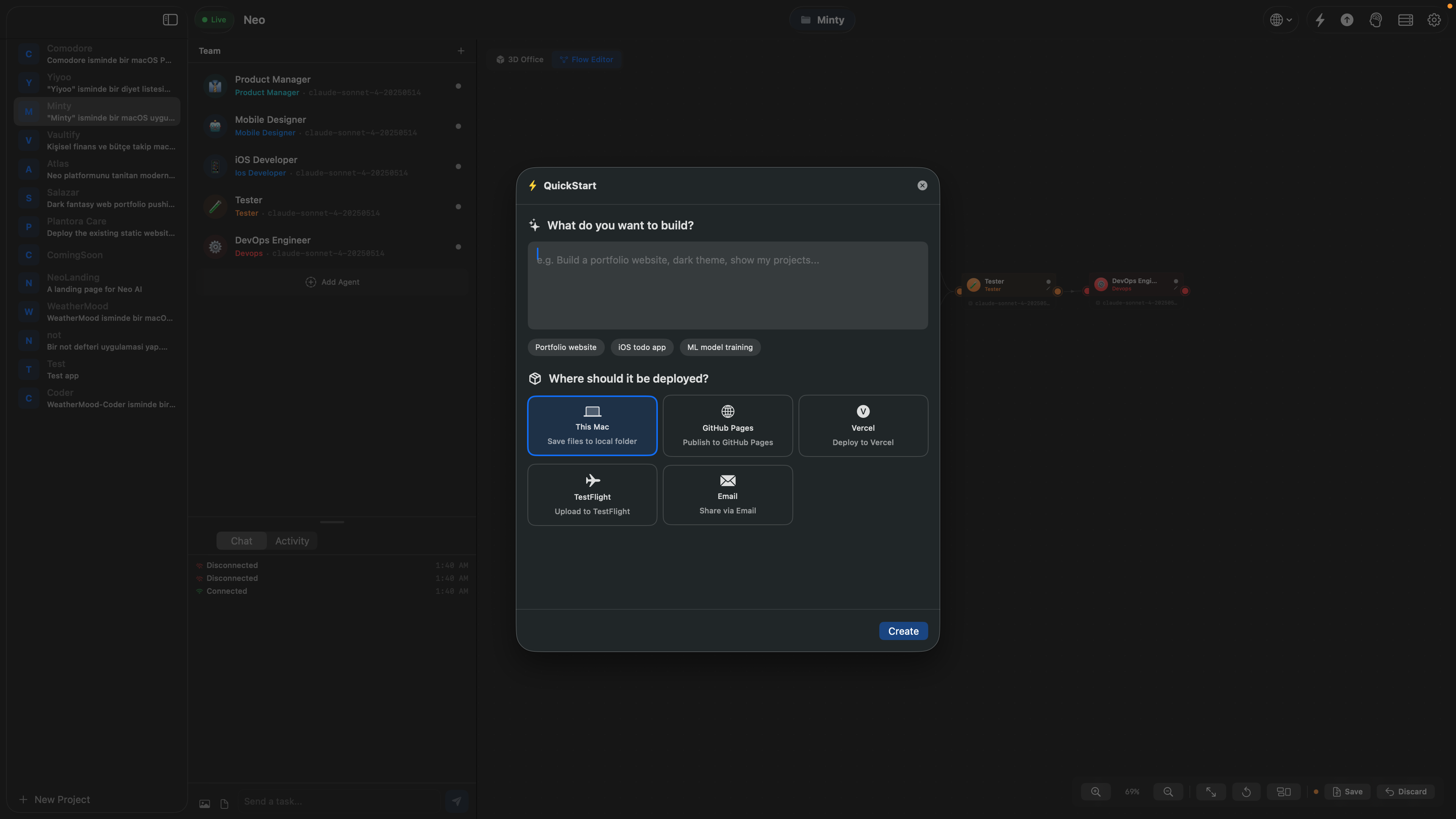

Three steps. That's it. Describe your idea and Neo handles the rest.

Describe Your App

Write a natural language prompt describing your idea. Be as detailed or as brief as you want.

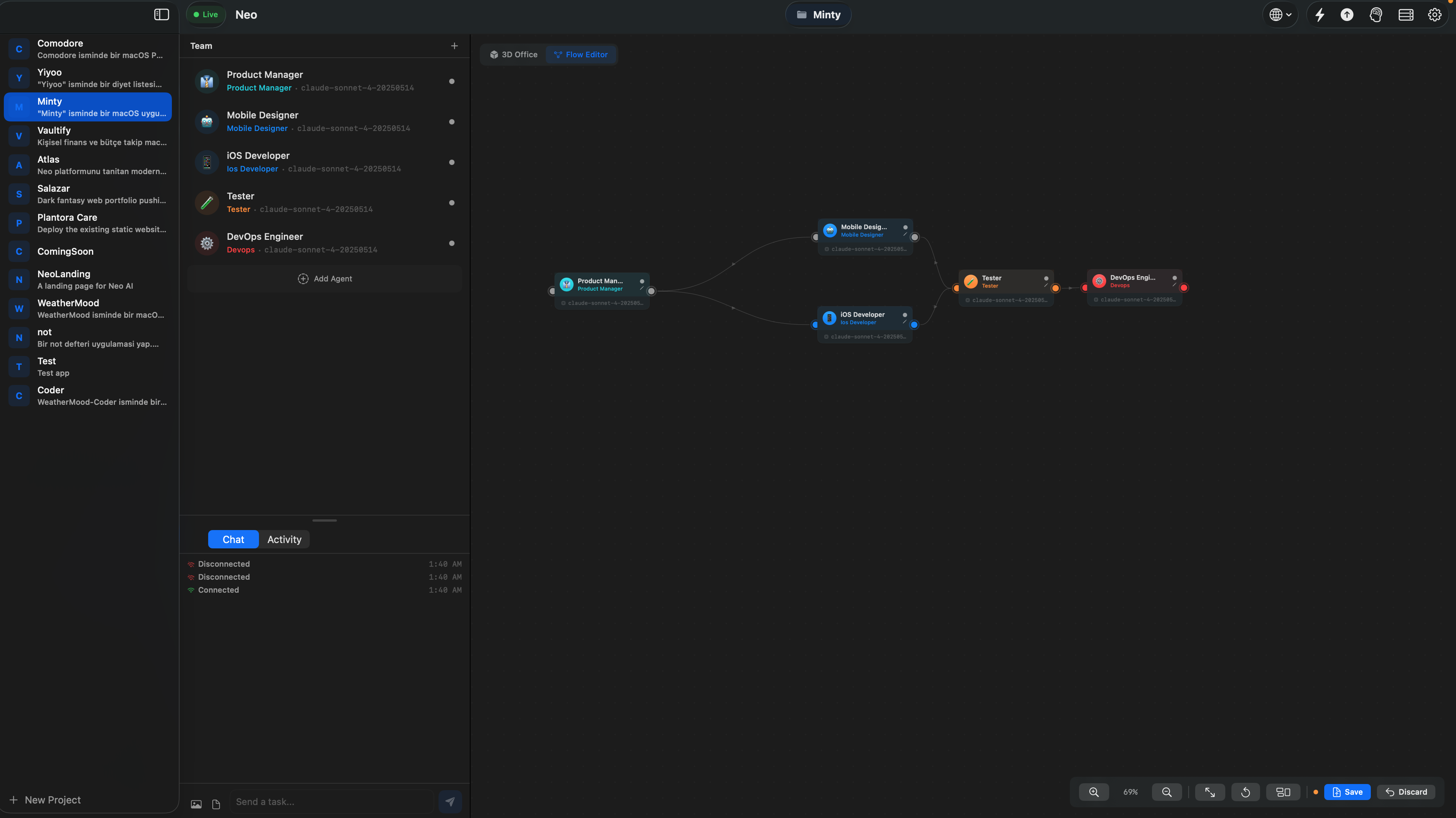

AI Agents Build It

A team of specialized AI agents designs, codes, tests, and reviews your project automatically.

Deploy Anywhere

One-click deployment to App Store, Google Play, Vercel, or your own servers.

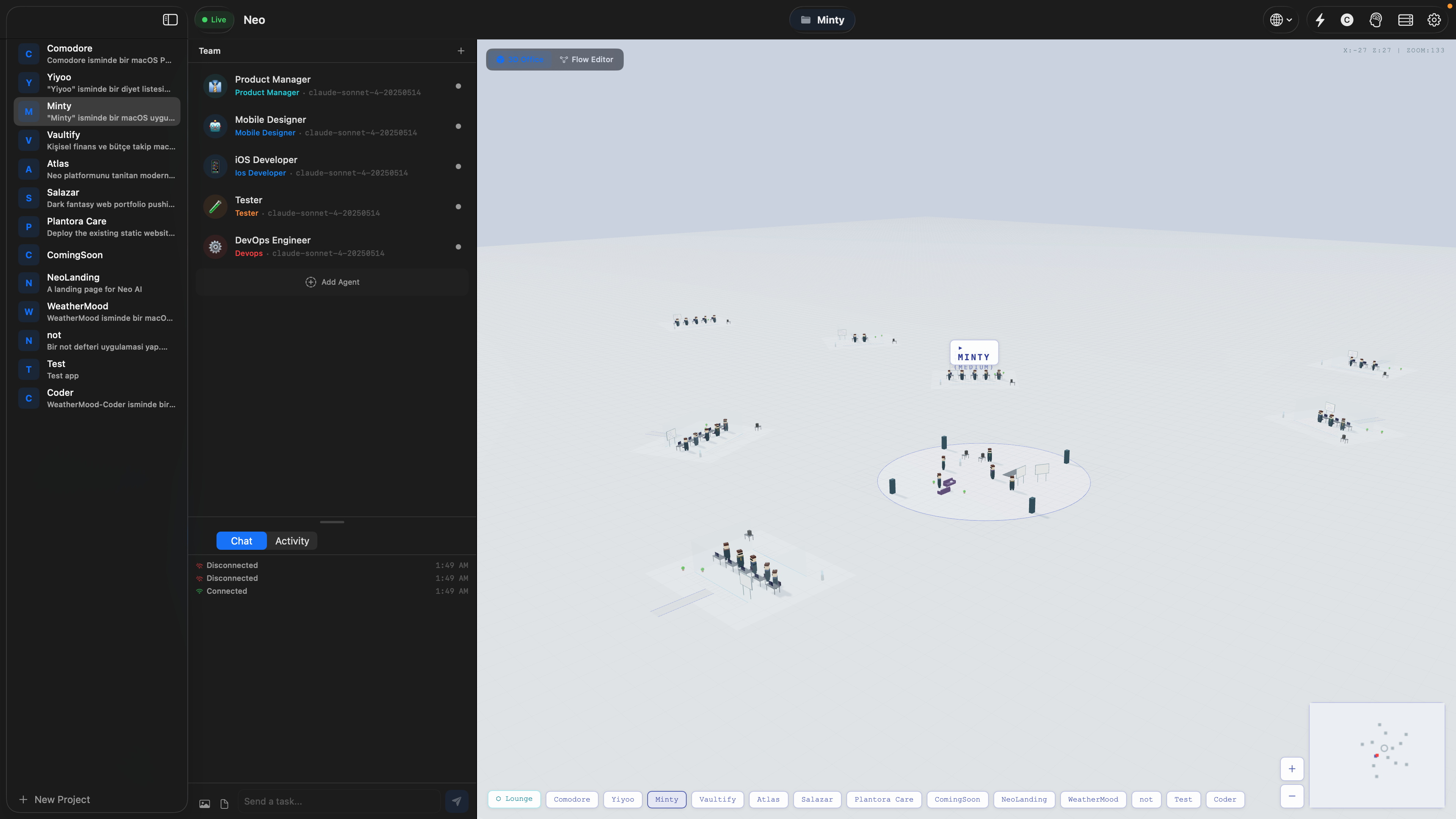

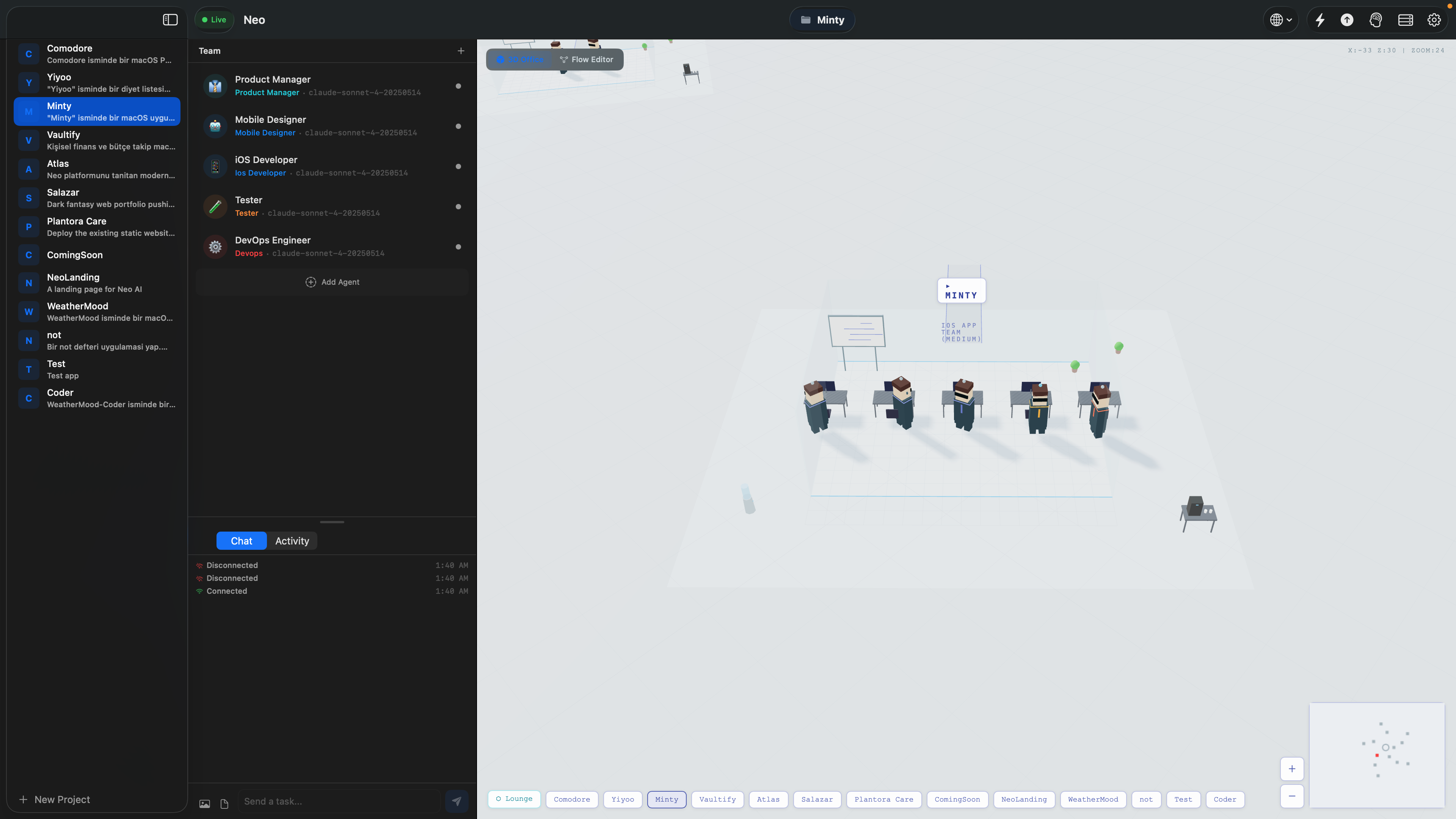

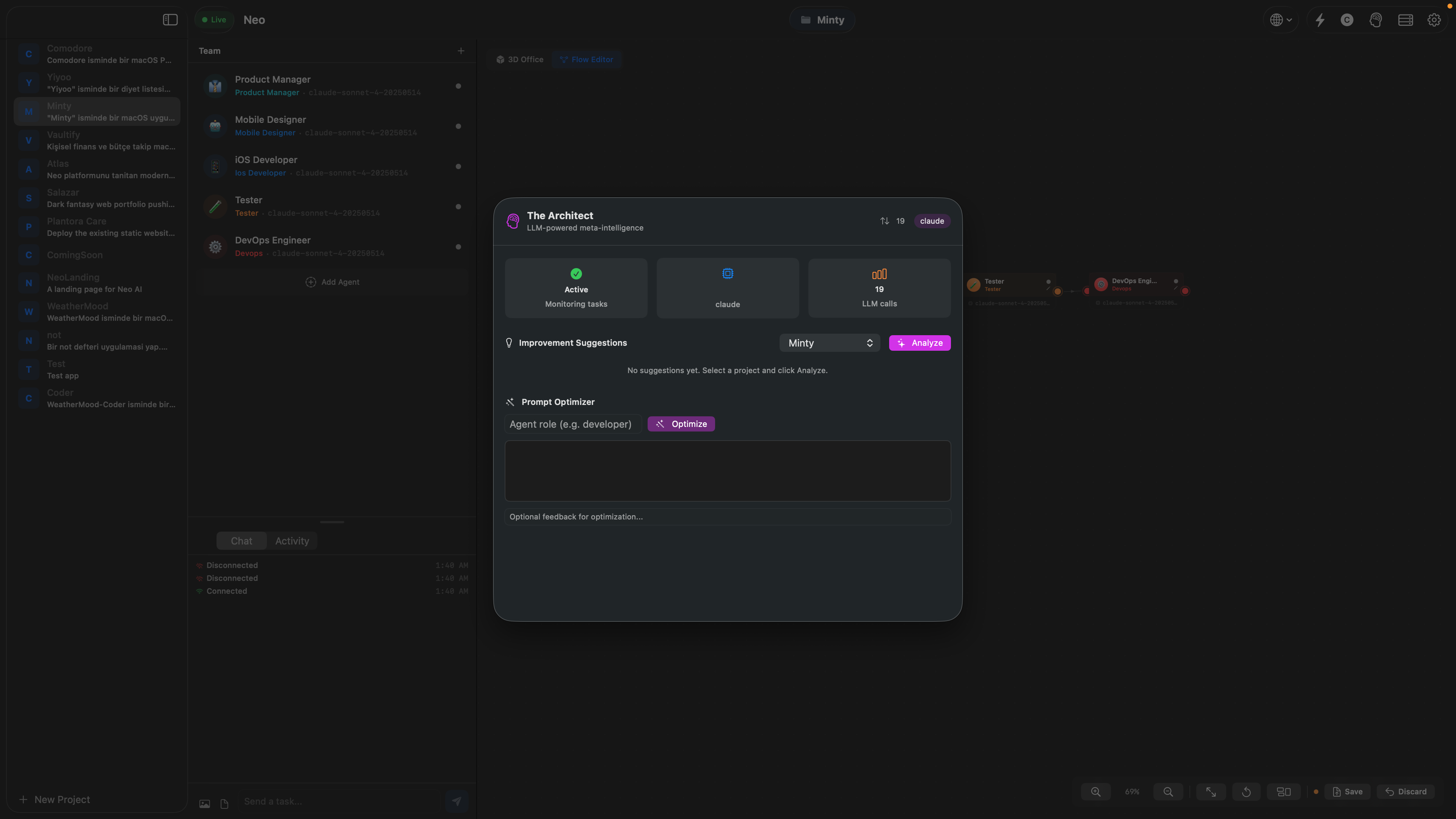

The Neo Experience

A native macOS app where AI agents build your software in real-time.

3D Agent Office

Watch your AI team collaborate in a virtual office environment.

What You Can Build

From mobile apps to full-stack platforms. If you can describe it, Neo can build it.

iOS & macOS Apps

SwiftUI · UIKit

Production-ready native apps with StoreKit subscriptions, push notifications, and App Store deployment.

Web Applications

Next.js · React · Vue

Full-stack web apps with auth, payments, real-time features, and one-click deploy to Vercel or Netlify.

Android Apps

Compose · Flutter

Native Android or cross-platform apps with Google Play deployment and Firebase integration.

Games

SpriteKit · Unity · Unreal

2D and 3D games with physics, multiplayer, and achievements. From casual to AAA quality.

APIs & Microservices

FastAPI · Express · Django

Production REST/GraphQL APIs with auth, rate limiting, OpenAPI docs, and serverless deployment.

AI/ML Pipelines

Python · TensorFlow · PyTorch

End-to-end ML pipelines with data processing, model training, and cloud deployment on AWS/GCP.

Why Neo is Different

Other tools help you write code. Neo builds entire applications.

| Feature | Neo | Claude Code | Cursor | Windsurf | Bolt.new | Lovable | v0 | Replit | Devin | AI Studio |
|---|---|---|---|---|---|---|---|---|---|---|
| Full app from prompt | ✓ | Manual | ✗ | ✗ | ✓ | ✓ | ✗ | ✓ | ✓ | Partial |
| Native iOS/macOS | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ |
| Native Android | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ |
| Game Development | ✓ | ✗ | ✗ | ✗ | Partial | ✗ | ✗ | Partial | ✗ | ✗ |
| Multi-agent team | 62 | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | Single | ✗ |
| Auto code review | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | Partial | ✗ |
| One-click deploy | ✓ | ✗ | ✗ | ✗ | Partial | ✓ | ✗ | ✓ | Partial | ✗ |
| Visual Kanban | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ |
| Local/offline models | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ |
| BYOK free tier | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ |
| CLI tool | ✓ | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ |
| Distributed workers | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ |
| Open source | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ |

vs Claude Code

Anthropic's CLI coding agent. Terminal-based, single project, code editing + terminal commands.

Neo provides a full multi-agent platform with deploy, kanban, and native app generation. Not just a code editor.

vs Cursor

AI-powered code editor (VS Code fork). Multi-file editing, codebase-aware.

Cursor is an editor. Neo is an autonomous platform that builds, tests, reviews, and deploys entire apps.

vs Bolt.new

In-browser full-stack web app builder by StackBlitz. Uses WebContainers for fast iteration.

Bolt is web-only. Neo builds native iOS, Android, macOS, games, and web with multi-agent teams.

vs Lovable

Full-stack web apps with Supabase backend. Good design quality, formerly GPT Engineer.

Lovable is web-only. Neo supports all platforms, local models, and distributed computing.

vs Devin

Autonomous AI engineer by Cognition. Plans and codes in a sandbox environment.

Devin costs $500/mo and takes hours per task. Neo builds in minutes with 62 agents across 42 team configurations and full native app support.

Build Anywhere. Deploy Everywhere.

13 platform integrations. From code to production in one workflow. Your agents handle the infrastructure.

Build For

Deploy To

Backend & Database

AI Models

From Prompt to Production

One sentence becomes a complete application. Neo orchestrates 62 specialized agents through a multi-phase pipeline.
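As a rough illustration of what a multi-phase pipeline can look like, here is a minimal Python sketch. The phase names (design, code, test, review) are borrowed from the "AI Agents Build It" step; everything else, including `Project`, `make_phase`, and `run_pipeline`, is hypothetical and not taken from Neo's actual implementation.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Project:
    """Hypothetical project state passed from one phase to the next."""
    prompt: str
    log: List[str] = field(default_factory=list)


Phase = Callable[[Project], Project]


def make_phase(name: str) -> Phase:
    """Build a placeholder phase that only records that it ran.

    Assumption: a real phase would hand the project to one or more agents.
    """
    def phase(project: Project) -> Project:
        project.log.append(name)
        return project
    return phase


# Assumed phase order, taken from "designs, codes, tests, and reviews".
PIPELINE: List[Phase] = [make_phase(n) for n in ("design", "code", "test", "review")]


def run_pipeline(prompt: str, phases: List[Phase] = PIPELINE) -> Project:
    """Run each phase in order, feeding the output of one into the next."""
    project = Project(prompt=prompt)
    for phase in phases:
        project = phase(project)
    return project


project = run_pipeline("A habit tracker for iOS")
print(project.log)  # ['design', 'code', 'test', 'review']
```

Modeling each phase as a plain function over shared project state keeps the ordering explicit and makes it easy to insert or reorder phases in the list.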

Every Device is a Worker

Connect any device to the Neo mesh. MacBooks, iPhones, iPads, Windows PCs, Linux servers, cloud GPUs. Each one becomes an AI compute node.

Worker Mode

Any device connects to the Neo orchestrator via WebSocket, receives tasks, runs LLM inference locally, and returns results.
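The receive, infer, return cycle described above can be sketched in a few lines of Python. This is a sketch under stated assumptions, not Neo's protocol: the JSON message shapes are invented for illustration, two `asyncio.Queue` objects stand in for the receive and send sides of a WebSocket, and `run_local_inference` is a placeholder for a real local model call.

```python
import asyncio
import json

# Hypothetical message shapes (Neo's real wire format is not documented here):
#   task:   {"id": "...", "prompt": "..."}
#   result: {"id": "...", "output": "..."}


async def run_local_inference(prompt: str) -> str:
    """Placeholder for a local LLM call (assumption: swap in a real model)."""
    await asyncio.sleep(0)  # yield control, as a real model call would
    return f"echo: {prompt}"


async def worker_loop(inbox: asyncio.Queue, outbox: asyncio.Queue) -> None:
    """Receive tasks, run inference locally, and return results.

    The queues stand in for a WebSocket connection to the orchestrator.
    A None message signals that the connection has closed.
    """
    while True:
        raw = await inbox.get()
        if raw is None:
            break
        task = json.loads(raw)
        output = await run_local_inference(task["prompt"])
        await outbox.put(json.dumps({"id": task["id"], "output": output}))


async def demo() -> list:
    """Feed two tasks to the worker and collect what it sends back."""
    inbox, outbox = asyncio.Queue(), asyncio.Queue()
    for i, prompt in enumerate(["design schema", "write tests"]):
        await inbox.put(json.dumps({"id": f"task-{i}", "prompt": prompt}))
    await inbox.put(None)  # simulate the orchestrator closing the connection
    await worker_loop(inbox, outbox)
    results = []
    while not outbox.empty():
        results.append(json.loads(outbox.get_nowait()))
    return results


results = asyncio.run(demo())
print(results)
```

Echoing the task `id` in each result lets an orchestrator match results to tasks even when several workers reply out of order.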

Commander Mode

Full project management from any device. Create projects, manage teams, send tasks, monitor progress, and deploy.

Multi-Agent Compute

Powerful servers host multiple agents simultaneously. A Mac Studio or GPU cluster becomes a full multi-agent node.

Native Clients: macOS (SwiftUI + SceneKit 3D), iOS (Commander + Worker), Go CLI with 30 commands. All can act as worker nodes.

Built With Neo

Real projects designed, coded, and deployed entirely by Neo's AI agents.

Be the First to Know

Neo launches soon. Join the waitlist for early access.
